Abstract

Without big data analytics, companies are blind and deaf, wandering out onto the web like deer on a freeway.

Geoffrey Moore

Organization Theorist and Author

The recent surge in big data technologies has left many executives, in both well-established organizations and emerging startups, wondering how best to harness big data. In particular, the analytics aspect of big data is enticing for both information technology (IT) service providers and non-IT firms because of its potential for high returns on investment, which have been heavily publicized, if not clearly demonstrated, by multiple whitepapers, webinars, and research surveys. Although executives may clearly perceive the benefits of big data analytics to their organizations, the path to the goal is not as clear or easy as it looks. And it is not just established organizations that face this challenge; even startups trying to take advantage of the big data analytics opportunity face the same lack of clarity on what to do or how to formulate an executive strategy. This article is primarily for executives who are looking for help in formulating a strategy for achieving success with big data analytics in their operations. It provides guidelines to help them plan an organization's short-term and long-term goals, and presents a strategy tool, known as the delta model, for developing a customer-centric approach to success with big data analytics.

Introduction

The idea of analyzing terabytes of data in under an hour was, for most people, unimaginable just a few short years ago. Thanks to big data, it is a reality today. But what is big data? When trying to understand what big data is all about and how it can help an organization, it is useful to view the concept in two different ways: i) big data as a storage platform and ii) big data as a solution enabler.

As a storage platform, big data is a means of storing large volumes of data from a variety of sources in a reliable (i.e., fault-tolerant) way. For example, big data solutions can reliably store real-time data from sensors, RFID tags, GPS locators, and web logs, thereby enabling near real-time access for millions of simultaneous users.
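To make the idea of fault-tolerant storage concrete, here is a minimal Python sketch rather than a production design. It assumes a toy in-memory cluster in which every record is copied to three nodes (the hypothetical `Node` and `ReplicatedStore` classes), so a read still succeeds when some of those nodes fail. Real big data stores implement replication far more elaborately.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Node:
    """One storage node; `alive` simulates whether the machine is reachable."""
    name: str
    alive: bool = True
    records: Dict[str, object] = field(default_factory=dict)


class ReplicatedStore:
    """Hypothetical toy store that copies every record to `replicas` nodes."""

    def __init__(self, nodes: List[Node], replicas: int = 3):
        if replicas > len(nodes):
            raise ValueError("need at least as many nodes as replicas")
        self.nodes = nodes
        self.replicas = replicas

    def replica_nodes(self, key: str) -> List[Node]:
        # Choose `replicas` consecutive nodes starting at a hash-based offset,
        # so the same key always maps to the same replica set in this process.
        start = hash(key) % len(self.nodes)
        return [self.nodes[(start + i) % len(self.nodes)] for i in range(self.replicas)]

    def put(self, key: str, value: object) -> int:
        # Write to every reachable replica; return how many copies were stored.
        stored = 0
        for node in self.replica_nodes(key):
            if node.alive:
                node.records[key] = value
                stored += 1
        return stored

    def get(self, key: str) -> object:
        # Any surviving replica can serve the read.
        for node in self.replica_nodes(key):
            if node.alive and key in node.records:
                return node.records[key]
        raise KeyError(key)


nodes = [Node(f"node-{i}") for i in range(5)]
store = ReplicatedStore(nodes)
store.put("gps:truck-7", (45.42, -75.69))
for node in store.replica_nodes("gps:truck-7")[:2]:
    node.alive = False  # simulate two machine failures
print(store.get("gps:truck-7"))  # (45.42, -75.69)
```

The GPS reading remains readable after two of its three replicas go offline; only when every replica is lost does the read fail.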

As a solution enabler, big data offers distributed computing using a large number of networked machines to reduce the total "time-to-solution". For example, exploratory analysis on large volumes of credit card data to identify any signals of fraud, known as fraud detection, usually requires hours or even days to complete. But, with big data techniques, such complex and large computations are distributed across multiple networked machines all running in parallel, thereby reducing the total time it takes to arrive at a solution.
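The split, compute, and merge pattern behind this speed-up can be illustrated with a short Python sketch. It is only an illustration under stated assumptions: threads in one process stand in for networked machines, and the fraud rule (flag a transaction larger than ten times the card's historical average spend) is hypothetical. Frameworks such as Apache Spark or Hadoop MapReduce apply the same pattern across a real cluster.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple

Transaction = Tuple[int, str, float]  # (transaction id, card id, amount)


def partition(items: List[Transaction], n: int) -> List[List[Transaction]]:
    """Split the workload into n chunks, one per worker (round-robin)."""
    return [items[i::n] for i in range(n)]


def screen_chunk(chunk: List[Transaction], avg_spend: Dict[str, float],
                 factor: float = 10.0) -> List[int]:
    """Map step: flag transactions far above the card's historical average.

    Hypothetical rule for illustration; cards with no history are never flagged.
    """
    return [tx_id for tx_id, card, amount in chunk
            if amount > factor * avg_spend.get(card, float("inf"))]


def screen_parallel(transactions: List[Transaction], avg_spend: Dict[str, float],
                    workers: int = 4) -> List[int]:
    chunks = partition(transactions, workers)
    # Threads stand in for networked machines in this sketch.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        per_worker = pool.map(screen_chunk, chunks, [avg_spend] * len(chunks))
        # Reduce step: merge the partial results from every worker.
        return sorted(tx_id for flagged in per_worker for tx_id in flagged)


transactions = [(1, "A", 20.0), (2, "A", 900.0), (3, "B", 50.0),
                (4, "B", 30.0), (5, "C", 5000.0)]
history = {"A": 25.0, "B": 40.0, "C": 100.0}
print(screen_parallel(transactions, history, workers=2))  # [2, 5]
```

Because each chunk is screened independently, adding workers shortens the elapsed time without changing the result.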

These two representations of big data – as a storage platform and as a solution enabler – go hand-in-hand and lead to what is commonly referred to as “big data analytics”. Thus, big data analytics enables an organization to reliably collect and analyze large volumes of data. Furthermore, because big data is domain neutral, its applications transcend verticals, meaning that these concepts can benefit all domains.

Some prominent examples in which the author has used big data analytics successfully include:

- Usage-based insurance: Why should an aggressive driver and a decent, rule-abiding driver pay the same insurance premium? What if a good driver could receive discounts on their insurance as an incentive for following safety regulations that not only save lives but also reduce the driver's total carbon footprint? Usage-based insurance implements that concept by calculating the insurance amount from actual usage behaviour rather than from preset calculations. This is made possible by monitoring driver behaviour, down to even infrequent hard braking or sudden acceleration, and providing incentives to drivers based on their driving habits. As will be discussed in this article, big data’s cloud-storage and stream-processing architecture makes this scenario possible.

- Predictive maintenance: What if fleet managers knew beforehand how many of their vehicles were going to break down, say, in the next 100 days? And what if they knew not only how many vehicles, but also exactly which vehicles were going to break down and for what failure reason? They could make alternative arrangements, save on extra labour and repair costs, and improve productivity. Big data analytics makes all of this possible. Once again, the stream-processing architecture described in this article can be used to predict machine component availability and minimize downtime.

- Epidemic outbreak detection: What if public health officials could analyze disease-causing factors and detect epidemics in real time, before they spread out of control? One of our recent case studies on public health data led us to model the disease-causing factors and create an epidemic outbreak detection mechanism, all of which was made possible by the real-time stream-processing architecture for big data.

- Sentiment analysis: Retailers thrive on capturing market share with promotions, discounts, and sales leads. But often the voice of the customer is lost somewhere in the social media feeds, and the real sense of "what works and what does not?" and "who is the potential customer and who is not?" is left uncaptured. What if an organization could capture all the social media data and monetize all the intentions to buy? What if it could gain unprecedented levels of insight into exactly what customers think about its products and which of its competitors’ products are stealing its market share? Text-mining algorithms applied to large social media feeds, facilitated by big data analytics, make this possible.
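The usage-based insurance scenario can be sketched in a few lines of Python. The thresholds (±3 m/s² for hard braking and sudden acceleration), the one-sample-per-second speed trace, and the discount schedule in the hypothetical `count_events` and `monthly_premium` functions are all assumptions chosen for illustration, not actuarial values.

```python
from typing import List, Tuple

# Assumed thresholds for this sketch, in m/s^2; insurers calibrate their own.
HARD_BRAKE = -3.0
HARD_ACCEL = 3.0


def count_events(speeds_mps: List[float], dt: float = 1.0) -> Tuple[int, int]:
    """Count hard-braking and sudden-acceleration events in a speed trace.

    Acceleration is approximated from consecutive speed samples taken dt seconds apart.
    """
    brakes = accels = 0
    for prev, cur in zip(speeds_mps, speeds_mps[1:]):
        a = (cur - prev) / dt
        if a <= HARD_BRAKE:
            brakes += 1
        elif a >= HARD_ACCEL:
            accels += 1
    return brakes, accels


def monthly_premium(base: float, events: int, km: float,
                    max_discount: float = 0.20, penalty_per_event: float = 0.02) -> float:
    """Hypothetical schedule: start from a 20% discount and lose 2 points per
    event per 100 km driven; the discount never goes below zero."""
    events_per_100km = events / (km / 100) if km > 0 else 0.0
    discount = max(0.0, max_discount - penalty_per_event * events_per_100km)
    return round(base * (1 - discount), 2)


trace = [10, 14, 14, 9, 9]          # speeds in m/s, one sample per second
brakes, accels = count_events(trace)
print(brakes, accels)                # 1 1
print(monthly_premium(100.0, brakes + accels, km=200))  # 82.0
```

A smooth driver keeps the full discount, while frequent harsh events push the premium back towards the base rate.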
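For the predictive maintenance scenario, one simple approach, assumed here purely for illustration, is to fit a straight line through each component's daily health readings and extrapolate when it will cross a failure threshold. The health index (1.0 for new, failure at 0.2), the linear wear model, and the function names are hypothetical; production systems typically use richer statistical or machine-learning models.

```python
from typing import Dict, List, Optional, Tuple


def days_to_threshold(health: List[float], threshold: float = 0.2) -> Optional[float]:
    """Least-squares line through (day, health) readings; days from the last
    reading until the line reaches the failure threshold, or None if not degrading."""
    n = len(health)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(health) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(health))
    slope = sxy / sxx
    if slope >= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_day = (threshold - intercept) / slope
    return max(0.0, crossing_day - (n - 1))


def at_risk(fleet: Dict[str, Dict[str, List[float]]], horizon_days: float = 100,
            threshold: float = 0.2) -> List[Tuple[str, str, float]]:
    """List (vehicle, component, days left) predicted to fail within the horizon,
    soonest first; the component name doubles as the likely failure reason."""
    risky = []
    for vehicle, components in fleet.items():
        for component, health in components.items():
            days = days_to_threshold(health, threshold)
            if days is not None and days <= horizon_days:
                risky.append((vehicle, component, round(days, 1)))
    return sorted(risky, key=lambda row: row[2])


fleet = {
    "truck-1": {"brakes": [1.0, 0.99, 0.98, 0.97], "battery": [0.9, 0.9, 0.9]},
    "truck-2": {"brakes": [1.0, 0.999, 0.998]},
}
print(at_risk(fleet))  # [('truck-1', 'brakes', 77.0)]
```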
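For the epidemic outbreak scenario, a minimal illustration is a moving-baseline detector that flags any day whose case count exceeds the mean of the previous seven days by more than three standard deviations. This is a simple stand-in chosen for this sketch, not the detection mechanism from the case study; the window, the multiplier `k`, and the one-case floor on the standard deviation are assumptions.

```python
from statistics import mean, stdev
from typing import List


def outbreak_alerts(daily_counts: List[int], window: int = 7, k: float = 3.0,
                    min_sigma: float = 1.0) -> List[int]:
    """Return the indices of days whose count exceeds the trailing-window
    mean by more than k standard deviations."""
    alerts = []
    for day in range(window, len(daily_counts)):
        baseline = daily_counts[day - window:day]
        mu = mean(baseline)
        # Floor the spread so a perfectly flat baseline does not trigger on +1 case.
        sigma = max(stdev(baseline), min_sigma)
        if daily_counts[day] > mu + k * sigma:
            alerts.append(day)
    return alerts


cases = [4, 5, 3, 6, 4, 5, 4, 5, 21, 6]
print(outbreak_alerts(cases))  # [8]
```

In a streaming deployment the same check would run each time a new day's count arrives, rather than over a stored list.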
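For the sentiment analysis scenario, the simplest form of text mining is lexicon-based scoring. The following sketch assumes tiny hand-written word lists, purchase-intent phrases, and single-word brand names (all hypothetical) and aggregates a net sentiment score and an intent count per brand; real deployments would use far larger lexicons or trained models, distributed over the social feed.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, List

# Tiny hand-made lexicons for illustration only.
POSITIVE = {"love", "great", "fast", "recommend"}
NEGATIVE = {"hate", "slow", "broken", "refund"}
BUY_INTENT = ("want to buy", "going to buy", "thinking of buying")


def analyze(posts: Iterable[str], brands: List[str]) -> Dict[str, Dict[str, int]]:
    """Per-brand net sentiment and purchase-intent counts from short posts."""
    stats = defaultdict(lambda: {"score": 0, "intent": 0})
    for post in posts:
        text = post.lower()
        words = re.findall(r"[a-z']+", text)
        score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
        intent = int(any(phrase in text for phrase in BUY_INTENT))
        for brand in brands:
            if brand.lower() in words:  # assumes single-word brand names
                stats[brand]["score"] += score
                stats[brand]["intent"] += intent
    return dict(stats)


posts = [
    "I love my Acme blender, great and fast",
    "Zenith blender is slow and broken, want a refund",
    "Thinking of buying a Zenith mixer",
]
print(analyze(posts, ["Acme", "Zenith"]))
# {'Acme': {'score': 3, 'intent': 0}, 'Zenith': {'score': -3, 'intent': 1}}
```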

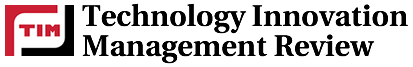

This list is by no means exhaustive, but each example draws upon the approach to big data analytics described in this article and depicted in Figure 1. These examples illustrate why big data analytics is one of the most prominent opportunities to emerge into mainstream computing in recent years. It promises easy adaptation "straight out of the box" to almost all sectors, such as healthcare, banking, retail, and manufacturing, making it a very interesting opportunity for both IT service providers and non-IT service consumers.

The cost-saving potential and new revenue opportunities that big data analytics promises for businesses are another driving factor for its adoption. For example, a few of my own clients in the automotive domain who, in recent years, implemented big data analytics to manage their spare-part inventory and labour schedules based on condition-based monitoring and predictive maintenance have reported, on average, approximately 25% lower maintenance costs and 75% less machine downtime, along with overall productivity increases of 25% due to predictable work schedules and improved work-life balance. Similarly, customers from the retail and finance sectors are seeing new opportunities to gain customers through big data social media analytics and advanced recommendation engines capable of profiling and analyzing customers’ shopping behaviours in real time. All these cost-saving and revenue-generation opportunities are encouraging solution providers to include big data analytics in their service and product portfolios.

Although the general approaches to analytics have become familiar to most executives, the integration of analytics with big data presents new challenges. The key challenge is that this integration must occur in two places: i) with the real-time streaming data and ii) with the persistent historical data. Analytics then uses one or both of these datasets depending on the nature of the problem being solved and the depth of the solution.

Figure 1. A typical schematic of a big data analytics solution

For example, as shown in Figure 1, real-time streaming data collected from vehicles (e.g., to analyze driver behaviour), shopping carts (e.g., to analyze shopper behaviour), or patient health records (e.g., to detect epidemic outbreaks) is usually processed against pre-stored historical profile data containing information such as other drivers’ profiles, shoppers’ profiles, disease-factor profiles, etc. The historical data is often large in volume, ranging from terabytes (10¹² bytes) to petabytes (1,000 terabytes) depending on the domain in question, and resides in reliable cloud storage that is readily accessible across all data centres.

Processing the real-time data to identify patterns similar to those in the historical data is achieved through what is called stream processing. During stream processing, complex event processing (CEP) engines crunch the data streaming in real time to detect observable patterns of anomaly or significance relative to the historical data. Sometimes, however, the historical data may not be in a ready-to-use format (e.g., it has missing data points or is not normalized) and hence has to be pre-processed before it can be used in stream processing. This problem is resolved by having a dedicated analytics-processing unit run alongside the cloud storage, scheduling batch processes at regular time intervals to ensure that all the collected data is pre-processed correctly and is in a readily usable state for the real-time stream-processing calculations.
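The two stages described above, batch pre-processing of historical data and real-time comparison against it, can be sketched in Python as follows. This is a simplified stand-in for a CEP rule rather than a CEP engine: gaps are filled by linear interpolation, the historical profile is reduced to a mean and standard deviation, and an event fires when the mean of the last few live readings drifts more than `k` standard deviations from that profile. The function and class names, the window size, and `k` are assumptions.

```python
from collections import deque
from statistics import mean, pstdev
from typing import List, Optional, Tuple


def fill_gaps(series: List[Optional[float]]) -> List[float]:
    """Batch step: linearly interpolate missing (None) points; edges copy the nearest value."""
    known = [i for i, v in enumerate(series) if v is not None]
    if not known:
        raise ValueError("series has no data points")
    filled = list(series)
    for i, v in enumerate(series):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            filled[i] = series[right]
        elif right is None:
            filled[i] = series[left]
        else:
            frac = (i - left) / (right - left)
            filled[i] = series[left] + frac * (series[right] - series[left])
    return filled


def build_profile(series: List[Optional[float]]) -> Tuple[float, float]:
    """Reduce cleaned historical data to (mean, standard deviation)."""
    clean = fill_gaps(series)
    return mean(clean), (pstdev(clean) or 1.0)  # avoid a zero-width band


class StreamDetector:
    """Stream step: compare a sliding window of live readings with the historical profile."""

    def __init__(self, profile: Tuple[float, float], window: int = 3, k: float = 3.0):
        self.mu, self.sigma = profile
        self.k = k
        self.recent = deque(maxlen=window)

    def push(self, value: float) -> bool:
        """Add one live reading; return True when the window deviates from history."""
        self.recent.append(value)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough readings yet
        return abs(mean(self.recent) - self.mu) > self.k * self.sigma


profile = build_profile([10, None, 12, 11, None, 10])
detector = StreamDetector(profile)
print([detector.push(v) for v in [11, 10, 11, 20, 21]])
# [False, False, False, True, True]
```

Keeping `fill_gaps` and `build_profile` separate from `StreamDetector` mirrors the split between the scheduled batch unit and the real-time path: the profile is recomputed periodically, while `push` stays cheap enough to run on every incoming event.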

The results of the stream-processing algorithms are then converted into statistical scores for computing a numeric index that can point to an actionable business insight, such as a driver's eligibility for insurance based on an assessment of risk, recommendations or discounts for shoppers, or alerts to health administration departments. The results are also stored in the cloud storage to serve as historical data against which future incoming data is processed.
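A minimal sketch of this scoring step follows, assuming the stream-processing stage emits per-driver z-scores. The hypothetical weights combine them into a single number, and a logistic curve maps that number onto a 0 to 100 risk index. Every index is appended to a history list, standing in for the write-back to cloud storage. The weights, score names, and the cut-off of 60 are illustrative assumptions.

```python
import math
from typing import Dict, List

# Hypothetical weights for per-driver outputs of the stream-processing stage,
# each expressed as a z-score relative to the historical profile.
WEIGHTS = {"hard_brakes_z": 0.5, "speeding_z": 0.3, "night_driving_z": 0.2}


def risk_index(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of z-scores, squashed onto 0-100 by a logistic curve (50 = average)."""
    combined = sum(w * scores.get(name, 0.0) for name, w in weights.items())
    return round(100 / (1 + math.exp(-combined)), 1)


def decide(index: float, history: List[float], approve_below: float = 60.0) -> str:
    """Map the index to an action and record it as history for future comparisons."""
    history.append(index)  # stands in for writing back to cloud storage
    return "eligible" if index < approve_below else "refer to underwriter"


history: List[float] = []
average = risk_index({})
risky = risk_index({"hard_brakes_z": 2.0, "speeding_z": 2.0, "night_driving_z": 2.0})
print(average, decide(average, history))  # 50.0 eligible
print(risky, decide(risky, history))      # 88.1 refer to underwriter
print(history)                            # [50.0, 88.1]
```

The logistic curve is one convenient choice because it keeps the index bounded however extreme the inputs are; a linear rescaling or a trained model would serve equally well.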

A central administrative command centre keeps track of the whole operation to ensure operational compliance and also handles any alerts, for example by implementing quarantine measures in case of epidemic outbreak signals or by sending repair personnel to the breakdown spot in case of any machine or vehicle breakdown. In addition to the centralized command centre, a well-designed big data analytics platform also offers remote administration capabilities, so that the relevant personnel are notified about any important alert or event wherever they are, through mobile short message service (SMS) or similar techniques, and can take corrective action in real time, thereby reducing the total response time.
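The alerting flow can be sketched as a small dispatcher: every alert is logged at the command centre, and high-severity alerts are also pushed to the person on call. The `send_sms` callable is a placeholder for whatever SMS gateway an organization uses, and the severity scale and routing table are assumptions for this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Alert:
    source: str    # e.g. a vehicle ID or a health region
    kind: str      # e.g. "breakdown" or "outbreak"
    severity: int  # 1 (informational) to 5 (critical); scale assumed for this sketch


@dataclass
class CommandCentre:
    send_sms: Callable[[str, str], None]  # placeholder for a real SMS gateway client
    on_call: Dict[str, str]               # alert kind -> phone number of the responsible person
    sms_min_severity: int = 4
    log: List[Alert] = field(default_factory=list)

    def handle(self, alert: Alert) -> bool:
        """Log every alert centrally; text the on-call person for severe ones."""
        self.log.append(alert)
        number = self.on_call.get(alert.kind)
        if number and alert.severity >= self.sms_min_severity:
            self.send_sms(number, f"[{alert.kind}] {alert.source} severity {alert.severity}")
            return True
        return False


outbox = []
centre = CommandCentre(send_sms=lambda to, msg: outbox.append((to, msg)),
                       on_call={"breakdown": "+1-555-0100"})
centre.handle(Alert("truck-7", "breakdown", 5))   # texted
centre.handle(Alert("truck-9", "breakdown", 2))   # logged only
print(len(centre.log), outbox)
# 2 [('+1-555-0100', '[breakdown] truck-7 severity 5')]
```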

Big data architectures such as this one have worked reliably in a wide range of business cases irrespective of the domain, and they are the most basic setup required for any organization dealing with big data analytics. In the following sections, we discuss how executives can build the capabilities their organizations need to develop similar architectural models and tools for their own operations, and how they can implement the required strategy using a customer-centric approach. Next, guidelines on laying out the short-term and long-term goals are presented, followed by competency-measure criteria for evaluating what it means to be successful in the big data analytics field. We conclude the article with a few remarks on some of the pitfalls to watch out for when implementing these techniques.

The Vision

A typical vision statement for any big data analytics organization or division would be: "to become established as the leading big data analytics solution provider in the industry". However, there is one primary challenge that needs to be resolved before such a vision can be realized. Although big data analytics transcends verticals in scope, with applications in almost all sectors ranging from automotive to retail to energy and utilities, operators in the respective sectors usually lack sufficient knowledge of its uses or benefits. Thus, many organizations have become aware that they need big data, but they do not know exactly what they need it for.

This gap between an organization's perceived need for big data analytics and its level of understanding of the domain creates a unique situation in which solution providers are now responsible for thinking about requirements on behalf of their customers, instead of customers coming up with their own requirements, as happens in traditional projects. This situation places an extra burden on the providers, because they have to provide not only the solutions, but also the problems!

Even if the big data analytics solution provider somehow understands the customers’ needs and comes up with a solution, there is no guarantee that the customer's existing methodologies or solutions are compatible with big data solutions. Most operators in the field still use traditional systems and databases that are geared towards traditional processing and are not suitable for real-time analytics or large-scale data processing. The cost and effort of integration alone can deter many customers from embracing any kind of big data solution.

Such a challenge requires solution providers to educate their customers on the applications of big data analytics to their respective domains and to provide solutions that are easy to integrate into their existing infrastructure. Thus, in the initial stages, an organization's focus should be on the solution enablers, either building them in-house or adapting open source solutions, such as: i) a stream-processing framework that enables customers to rapidly adapt their existing infrastructure for real-time analytics, and ii) an Internet of Things (IoT) platform that enables solutions for cloud storage and big data analytics, seamlessly bridging the gap between their existing systems and big data solutions.

However, owning the solution enablers is just a small step towards building a foundation, and it alone cannot make the vision statement come true. A full and solid foundation has to be built and followed up with medium- and long-term strategic goals to realize the grand outcome. The subsections that follow illustrate a sample set of short-, medium-, and long-term goals and the steps to be taken to realize each of them. Short-term goals aim to lay the technology foundation and build a strong customer base for sustainable revenue generation, whereas medium-term goals strive to support the delivery functions that retain the acquired customer base and reinforce the customer bond with high-quality outputs and optimal schedules. Long-term goals aim to lead the market with innovative solutions and strategic partnerships.

Short-term goal: Lay the foundation

The following immediate activities focus on establishing the foundation upon which solutions will be built:

- Platform building: The stream-processing and Internet of Things platform should act as the foundation upon which big data analytics solutions are built for customers from various segments. It should encompass the complete end-to-end workflow, from real-time event capture to end-user analytics and cloud storage, in a form that can be demonstrated to clients. A targeted list of customers should be used for marketing campaigns and workshops that showcase the platform capabilities in a way customized to their needs. Proof-of-concept proposals should also be developed.

- Competency building: Big data analytics is a cross-domain endeavour, and competencies need to be built for the various domains for which solutions are being targeted. Competency building should focus on filling the gaps between customer requirements and resource competencies in the identified verticals. This task primarily involves increasing the analysts' comfort level with big data technologies and the platform workflow. The integration between analytics and big data technologies happens at this stage: analysts should proactively build customer solutions in a demonstrable form while working closely with the big data platform leaders, and the big data teams should take the analysts' feedback into account when planning platform improvements.

Short-term goal: Market penetration

Other immediate actions should focus on market penetration, expanding the customer base and the reach of solutions through proactive solutions and brand promotion:

- Proactive solutions: Initial response time is one of the key factors for high customer satisfaction ratings. Proactively identifying customer requirements and planning solutions ahead of time improves initial response times enormously and gives the impression of thought leadership. To start with, use cases should be identified and solutions proactively built for one particular vertical (e.g., automotive or healthcare) that the firm knows well; once a reasonable customer base is established, activities can gradually be expanded to the remaining verticals. Of course, an organization that is well established, with enough resources and budget, can also start with multiple verticals in parallel, though in such cases accountability and success tracking become a major and unnecessary burden. It is generally advisable to start by identifying a core field of skill and then expand, rather than trying to tackle all verticals at the same time. Table 1 lists solutions that can be built for each vertical and can be used as a starting point.

- Brand name promotion: Brand loyalty often dictates market penetration and customer reach. Webinars, whitepapers, and research articles are a good way to expand customer reach: they not only educate customers but also promote the brand name and associate the brand identity with leadership. Brand identity and customer education can be enriched by cultivating a publication culture among engineers. Organizations should also incorporate knowledge triage systems and encourage open knowledge sharing among teams, both internally and, where possible, externally. By putting their people first, creating an identity for them, and making them leaders, companies become identified as leaders in the market.

Medium-term goal: Architectural standardization

Organizations can improve the quality of the solutions and shorten the time to market through architectural standardization, as follows:

- While developing cross-vertical solutions, recurring problem patterns should be identified and reusable tools and middleware frameworks should be created.

- Variety in data is one of the main challenges for big data when dealing with cross-vertical solutions. In such scenarios, schema-neutral architectures capable of supporting dynamic ontologies should be designed and used for establishing standards.

- Best-practice guidelines should be widely published and enforced among all teams to standardize the offerings and improve the solution quality.

- Big data technologies are vast in scope, ranging from large-volume data storage to real-time, high-velocity streaming and analytics. A culture of subject matter experts should be promoted, and efforts should be made to increase the pool of specialist talent. These subject matter experts should be held accountable for the quality of the solutions their respective teams deliver.
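One way to read the guideline on schema-neutral architectures capable of supporting dynamic ontologies is sketched below, under assumptions of our own: records from any vertical are stored as (entity, concept, value) triples, and a runtime-extensible mapping translates each source's field names into shared concepts. The `Ontology` and `TripleStore` classes are hypothetical illustrations, not a standard.

```python
from collections import defaultdict
from typing import Any, Dict, List, Tuple


class Ontology:
    """Maps source-specific field names to shared concepts; extendable at runtime."""

    def __init__(self) -> None:
        self.aliases: Dict[str, str] = {}

    def register(self, concept: str, *field_names: str) -> None:
        for name in field_names:
            self.aliases[name.lower()] = concept

    def concept_for(self, field_name: str) -> str:
        # Unmapped fields are kept under their own (lower-cased) name.
        return self.aliases.get(field_name.lower(), field_name.lower())


class TripleStore:
    """Schema-neutral storage: every fact is an (entity, concept, value) triple."""

    def __init__(self, ontology: Ontology) -> None:
        self.ontology = ontology
        self.triples: List[Tuple[str, str, Any]] = []

    def ingest(self, entity: str, record: Dict[str, Any]) -> None:
        for field_name, value in record.items():
            self.triples.append((entity, self.ontology.concept_for(field_name), value))

    def entity_view(self, entity: str) -> Dict[str, List[Any]]:
        view: Dict[str, List[Any]] = defaultdict(list)
        for e, concept, value in self.triples:
            if e == entity:
                view[concept].append(value)
        return dict(view)


onto = Ontology()
onto.register("location", "gps", "geo_pos")
onto.register("temperature", "temp_c", "bodyTemp")
store = TripleStore(onto)
store.ingest("truck-7", {"GPS": (45.4, -75.7), "temp_c": 88})   # automotive feed
store.ingest("patient-12", {"bodyTemp": 38.9, "ward": "B"})     # healthcare feed
print(store.entity_view("truck-7"))
# {'location': [(45.4, -75.7)], 'temperature': [88]}
print(store.entity_view("patient-12"))
# {'temperature': [38.9], 'ward': ['B']}
```

Because no table schema is fixed in advance, a new vertical only needs new `register` calls, not a migration.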

Long-term goal: Drive the leadership message

The organization's long-term goals should be aimed at establishing a leadership position in the market:

- Big data technologies are still evolving, and their integration with analytics platforms remains challenging. There is an urgent need for research on more seamless integration options. Any organization that takes the lead in such research and produces viable options is well positioned to become the de facto integration leader.

- Organizations should promote their internal architectural practices and best-practice guidelines as industry standards. New optimized protocols for low-latency, real-time near-field communications are good examples of opportunities in the big data standards arena that can serve as architectural best-practice guidelines. Companies that promote and drive these standards in the early days of big data's evolution can become established as industry leaders.

- Partnerships should be sought with leaders in various segments and open challenges should be identified. Success in big data analytics requires strong cooperation between big data technology experts and leaders from various customer segments.

- By developing innovative solutions for the identified open challenges, organizations can lead their industry.

Table 1. Example list of big data analytics solutions for different verticals

| Industry | Solutions |
|---|---|
| Automotive | Fleet management · Predictive maintenance · Optimal workforce scheduling and inventory control · Eco-routing · Sensor-based traffic monitoring · Emergency response and passenger safety |
| Healthcare | Public health · Real-time disease progression monitoring and epidemic outbreak detection · Healthcare cost predictions based on living conditions and dietary habits · Clinical decision support · Health information exchange with electronic health records · Diagnostic assistance |
| Retail | Real-time asset tracking · Supply chain monitoring |
| Finance | Usage-based insurance · Real-time fraud detection · Credit-score modelling |
| Energy/Utilities | Smart-grid usage prediction and dynamic load generation based on smart sensors · Real-time monitoring of operational metrics for failure prediction |

The Strategy

Clearly stated business goals lie at the centre of any successful organization. But what defines success? How should an organization be measured on its achievements? Typically, an organization is judged based on the quality of its "4Ps": people, partners, processes, and products. Although people and processes are internal to organizations, partners and products are external indicators of success, and more often than not, they serve as cross-comparison criteria. For organizations dealing in big data analytics, there are three broad categories of such comparison criteria:

- Current offerings
  - Solution architectures
  - Data handling capabilities
  - Discovery and modeling tools
  - Algorithms
  - Model deployment options
  - Lifecycle tools
  - Integration capabilities
  - Support for standards

- Solution strategy
  - Licensing and pricing
  - Resources dedicated to the solutions
  - R&D spending
  - Ability to execute the strategy
  - Solution roadmap

- Market presence
  - Financials
  - Global presence
  - Client/customer base
  - Partnership with other vendors
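When these criteria are used to compare providers, a common device is a weighted scorecard. The sketch below assumes 1-to-5 ratings for a few sub-criteria in each of the three categories and hypothetical category weights of 40/30/30; both the weights and the averaging scheme are illustrative choices, not part of the criteria themselves.

```python
from typing import Dict

# Hypothetical category weights (they sum to 1); each organization sets its own.
CATEGORY_WEIGHTS = {"current_offerings": 0.4, "solution_strategy": 0.3, "market_presence": 0.3}


def category_score(ratings: Dict[str, float]) -> float:
    """Unweighted average of the 1-5 ratings given to one category's sub-criteria."""
    return sum(ratings.values()) / len(ratings)


def vendor_score(vendor: Dict[str, Dict[str, float]],
                 weights: Dict[str, float] = CATEGORY_WEIGHTS) -> float:
    """Weighted sum of category averages, on the same 1-5 scale."""
    return round(sum(w * category_score(vendor[c]) for c, w in weights.items()), 2)


vendors = {
    "Vendor X": {
        "current_offerings": {"algorithms": 4, "integration_capabilities": 2},
        "solution_strategy": {"r_and_d_spending": 3, "solution_roadmap": 5},
        "market_presence": {"global_presence": 2, "client_base": 4},
    },
    "Vendor Y": {
        "current_offerings": {"algorithms": 5, "integration_capabilities": 4},
        "solution_strategy": {"r_and_d_spending": 2, "solution_roadmap": 3},
        "market_presence": {"global_presence": 3, "client_base": 3},
    },
}
ranking = sorted(vendors, key=lambda name: vendor_score(vendors[name]), reverse=True)
print({name: vendor_score(vendors[name]) for name in ranking})
# {'Vendor Y': 3.45, 'Vendor X': 3.3}
```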

Based on how many of the above criteria they fulfil, solution providers are broadly categorized into three capability levels, which progress in complexity and indicate an organization's maturity in delivering solutions around analytics:

Level 1. Data analysis services

- The customer provides data and pays for analysis insights derived from that data.

- The insight outputs delivered to the customer are not reusable and are valid only for the particular dataset provided.

- If there is new data, the customer has to use the service again (and hence pay) for insights on the new data.

- The customer will not be aware of the tools used or methodologies applied in deriving the insight.

- There is no lock-in. When new data becomes available, the customer is free to choose any other service provider.

Level 2. Model-building services

- The customer provides a business problem and a sample dataset related to that problem, and pays for a model that solves the problem.

- The model output delivered to the customer is reusable for different datasets for the same problem.

- If there is a new business problem, the customer has to use the service once again (and hence pay) for new models that can solve the new problem.

- The customer will be somewhat aware of the tools used and methodologies applied, given that the model will be deployed onto customer systems and their staff will be trained to use it with different data.

- The customer is locked in only for the duration of the model validity. For new business problems, the customer is free to choose other providers for model building.

Level 3. Expert systems production

- The customer defines the business nature and pays for expert systems that can build models of any business problem that can possibly arise in the course of stated business operations.

- The expert system delivered to the customer is reusable for the lifetime of the business.

- The expert system will be capable not only of solving the present business problems stated explicitly by the customer, if any, but also of predicting potential future problems and helping alleviate them even before they happen.

- Depending on the licensing terms, the expert systems delivered to the customer could also be applied to other business domains, because the architecture separates domain knowledge from business data.

- The customer will have to be fully aware of the modelling techniques and methodologies to be able to derive most benefit out of the delivered expert system.

- The customer is locked in for the lifetime of their business. Switching providers is not easy or feasible.

Leaders in predictive analytics solutions are expected to offer a rich set of algorithms to analyze data, architectures that can handle big data, and tools for data analysts that span the full predictive analytics lifecycle. This diversity of offerings is achieved through competency building, architectural standardization, research drive cultivation, and other strategic drives, as presented below:

- People
  - Competency building
  - Recognition and proliferation of subject matter experts
  - Research drive cultivation

- Processes
  - Architectural review processes
  - Best practice guidelines
  - Reusable frameworks and tools for standardizing solutions

- Partners
  - Educate customers on what is possible with big data analytics
  - Bridge the gap between existing customer solutions and big data requirements
  - Market penetration by laying out new industry standards
  - Brand name promotion with whitepapers, blogs, research articles, and webinars

- Products
  - Reduce the initial response time with proactive solution approaches
  - Lead the pack by researching and implementing solutions for open challenges

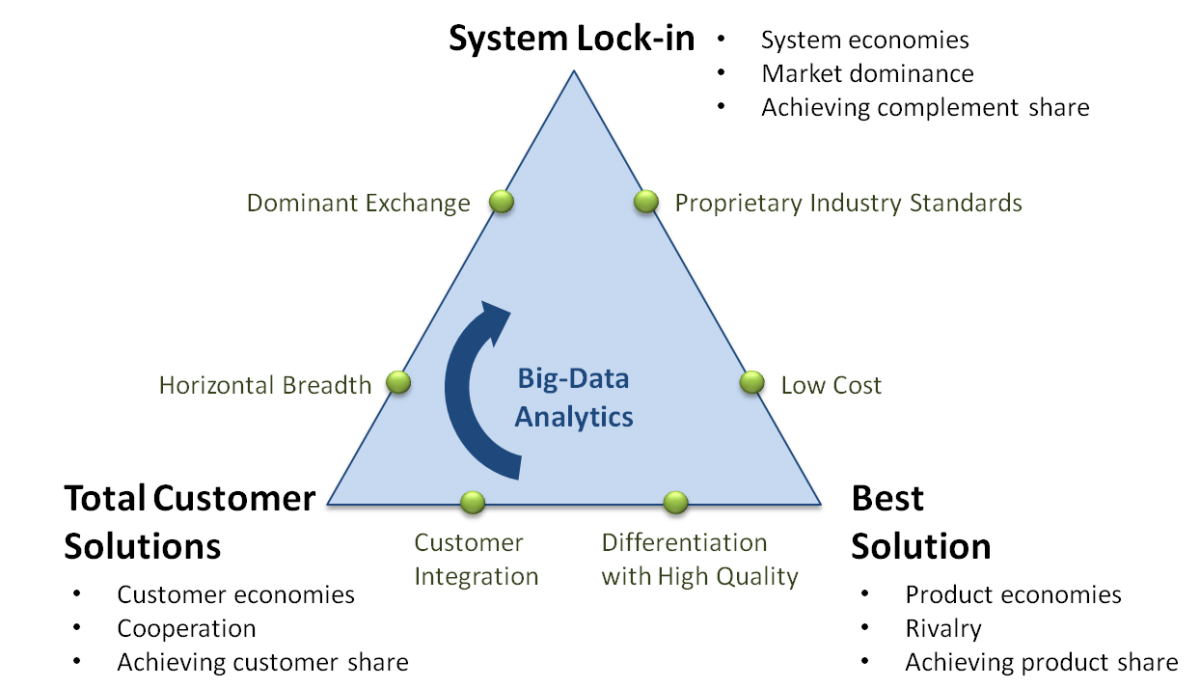

The grand strategy that encompasses all these activities can be summarized, for brevity, as a three-point triangle known as the delta model (Figure 2). The three options represented in the triangle are the milestones for the strategic vision. The strategy starts by aiming for the first point, at the right-hand side of the triangle, the Best Solution positioning.

Figure 2. Strategy for the big data analytics business drive

Best Solution positioning

This positioning aims to make the organization the best solution provider in the market. It provides a base of sustainable revenue for targeting the next positions and establishes a brand-name presence in the market with a reasonable customer base. This, however, cannot be the final position, for several reasons:

- The position is rather inward and narrow, based on the prevailing product economics. The solutions are frequently standardized and restricted to Level 1, and the customers are faceless.

- The way to attract, satisfy, and retain the customer is through the inherent characteristics of the solution itself. Quality of insights delivered and quick turnaround time are what make the customers come back.

- The yardsticks for success at this level are the relevant competitors that the organization is trying to match or surpass.

- Commoditization is a real threat and is often an unavoidable outcome, because there is not much scope for innovation or creativity at this level; delivering Level 1 insights is vulnerable to imitation.

- The measure of success is product share, which ultimately can fragment the business activities into a set of solution or product offerings.

Total Customer Solutions positioning

On the left-hand side of the triangle sits the option of Total Customer Solutions, which represents a 180-degree departure from the Best Solution positioning. In this phase, rather than selling standardized and isolated services or products to depersonalized customers, the organization provides Level 2 solutions consisting of a portfolio of customized products and services that represent a unique value proposition to individualized customers. This positioning improves customer bonding and provides a continuous stream of revenue to enable experimentation, as follows:

- Instead of acting alone, the organization engages the relevant set of partners that constitute the extended enterprise.

- The relevant overall measure of performance becomes the total customer share.

- Not limited by the internal product development capabilities, the joint efforts become the key success factors, such as contributing to the open source big data frameworks and driving their proliferation.

- Although this position is relatively safe, with reasonable customer lock-in, it is not safe enough, because competitors are not yet locked out and can still take customers away with better offerings.

System Lock-in positioning

At the top of the triangle stands the most demanding strategic option, the one every organization craves: System Lock-in. In this stage, the organization addresses the full customer network as the relevant scope, with gaining complementor share as the ultimate objective and system economics as the driving force, as follows:

- Those who are successful in reaching this position gain de facto dominance in the market, which assures not only customer lock-in but also competitor lock-out.

- Complementors play key roles, because they are the basis for consolidating success. For example, application developers are complementors for Microsoft: they are not on Microsoft's payroll, but they contribute to the success of its products. Similarly, Android developers contribute to the success of Android, and so on. A Level 3 expert system with open standards and a third-party plugin application programming interface (API), for example, can provide such system lock-in.

The Best Solution strategy rests on the classical form of competition, which dictates that there are only two ways to win: either through low-cost provisioning or through high-quality differentiation. The problem, however, is that differentiation is seldom a source of sustainable advantage because, once the strategy is revealed and becomes publicly known, technology often allows a quick imitation that neutralizes the sought-after competitive advantage. In the big data analytics case, everyone has access to the same set of tools for building Level 1 solutions. The low-cost provisioning option does not provide much room for success either. After all, how low can one go and how many players can enjoy simultaneous low cost advantage?

The transformation toward a Total Customer Solutions positioning requires a very different way of capturing the customer and a very different mindset. To achieve this shift, the organization has to pursue three options simultaneously:

- Segmenting the customers carefully, arranging them into proper tiers that reflect distinct priorities, and providing differentiated service to each tier based on the identified priorities. For example, customers looking for Level 1 analytics solutions cannot benefit from preferential treatment as much as those who are looking for Level 3 analytics solutions.

- Pro-actively identifying the challenges in the customer's business domain and proposing solutions to alleviate them even before they happen, thereby displaying thought leadership and gaining customer trust.

- Expanding the breadth and reach of the solutions to provide full coverage of services to the customers.

Once in the Total Customer Solutions position, the organization is left with the final, hard-to-reach positioning at the top of the triangle: System Lock-in. One powerful way to achieve this position is by developing and owning industry standards, perhaps with an open API and third-party solution compatibility.

Another way to achieve system lock-in is through a dominant exchange strategy. For example, if the Internet of Things system is designed to be domain-neutral and schema-invariant, it can become a dominant data exchange platform, positioned to achieve system lock-in in the long run, provided it is envisioned and executed correctly.

Conclusion

The present-day challenges in big data analytics require solution providers to first educate their customers on how big data analytics applies to their respective domains, and then to provide solutions that are easy to integrate and compatible with their existing infrastructure. A good roadmap for entering the big data analytics market starts with short-term goals aimed at laying the technology foundation and building a strong customer base for sustainable revenue. Medium-term goals then strengthen the delivery functions, retaining the acquired customers and reinforcing the customer bond through high-quality outputs and optimal schedules. Long-term goals aim at leading the market with innovative solutions and strategic partnerships.

The delta model outlined in this article is a customer-based approach to strategic management. It is based on customer economics and, as such, is well suited to both established organizations and early-stage startups, because it emphasizes achieving success through customer bonding rather than by working against the competition. Implementing the delta model therefore requires a thorough understanding of customer needs and openness to partnerships. Big data technologies, at least today, thrive on open source efforts and are therefore well suited to this kind of partnership model, in which crowdsourcing and open development are the fundamental modes of business.

Some of the pitfalls one may encounter in implementing such a strategy, however, are a lack of customer interest in cooperation, limited partnership opportunities, and a scarce pool of capable technical resources. Especially for startups that have yet to establish their brand, the main resistance to any innovative big data analytics solution comes directly from customers' apathy toward such solutions. Skeptical and suspicious, many customers today are not yet ready to share their business data with big data analytics entrepreneurs. Concern over the security of their data in open big data cloud architectures is the main source of this suspicion. Adding to this is the scarcity of qualified technical resources capable of innovating on big data technologies, which leads companies to treat competitors increasingly as opponents rather than as prospective partners. This heavy inward focus on attracting, engaging, and retaining customers and resources causes companies to lose their global focus, leaving them as just another group of disconnected functional silos. Until the technology platforms mature enough to resolve customers' security concerns, and until technical resources become abundant, this situation will continue to present both a challenge and an opportunity for big data entrepreneurs.

Keywords: big data, business vision, executive strategy, IT entrepreneurship, predictive analytics
