Abstract

I would argue that, however intelligent machines may be made to be, there are some acts of thought that ought to be attempted only by humans.

Joseph Weizenbaum (1923–2008)

Computer Scientist

The increasing relevance of artificial intelligence (AI) applications in various domains has led to high expectations of benefits, ranging from precision, efficiency, and optimization to the completion of routine or time-consuming tasks. Particularly in the field of education, AI applications promise immense innovation potential. A central focus in this field is on analyzing and evaluating learner characteristics to derive learning profiles and create individualized learning environments. The development and implementation of such AI-driven approaches rely on learners' data and thus involve several privacy, ethical, and moral challenges. In this paper, we introduce the concept of human-centered AI and consider how an AI system can be developed in line with human values without posing risks to humanity. Because the education market is in the early stages of incorporating AI into educational tools, we believe that this is the right time to raise awareness about the use of principles that foster human-centered values and help in building responsible, ethical, and value-oriented AI.

Introduction

The relevance of artificial intelligence (AI)-supported systems in education, or AI in education (AIED), has increased dramatically in recent years, arousing great expectations and offering huge innovation potential across the entire education sector (EdTechXGlobal, 2016; Holmes et al., 2019). AI has significantly expanded traditional practices in education, while new digital solutions have emerged that are gaining market share alongside traditional concepts. Moreover, the use of AI technologies has begun to allow for sustainable change in education and knowledge transfer.

The aim of AIED is to develop adaptive, inclusive, flexible, personalized, and effective learning environments that complement traditional education and training formats (Luckin et al., 2016; Renz et al., 2020a). Popenici and Kerr (2017) defined AI, in the context of education, “as computing systems that are able to engage in human-like processes such as learning, adapting, synthesizing, self-correction and the use of data for complex processing tasks”. In addition, AI technology promises to provide deeper insights into learners' learning behaviours, reaction times, or emotions (Luckin et al., 2016; Holmes et al., 2019; Renz et al., 2020a). AI-driven tools can be categorized into two main areas: narrow AI/weak AI and general AI/strong AI. The former refers to an AI agent that is designed to solve one specific task, whereas the latter refers to an AI agent that is capable of solving multiple given problems irrespective of the task or domain. Almost all available educational tools comprise both narrow and general AI together, whereas a solely general/strong AI is unlikely to exist even in the future (Zawacki-Richter et al., 2019).

Because the outcomes of these tools rely heavily on data produced in a specific task or domain, they affect people in several ways. For example, some are concerned about the use of private information, such as learner behaviours, abilities, and mental states while performing educational activities (Holmes et al., 2018). An increased need has therefore arisen to address the technological and societal implications associated with the emergence and use of AIED tools. Discourse continues about how to better operationalize the various values that arise during the development of AI systems, rather than only applying rules and guidelines after AI deployment.

In this paper, we introduce the “design-for-values” approach, which is based on a methodology aimed at incorporating moral values as part of technological design, research, and development. Developing AI systems entails processes such as identifying social values, deciding on a moral deliberation approach, and linking values to formal system requirements and concrete functionalities (Dignum, 2019). The questions that this research endeavors to answer concern social issues associated with the digitization of education through AIED tools, as well as the changes that need to be made to these tools so that people will accept them as useful and trustworthy. We therefore focus on how responsible AIED tools can be developed and operationalized in a people- or user-friendly way.

In this people-friendly effort, we present several aspects of value-centered, human-centered, ethical, and responsible AI in the domain of education, which in our view remains underexplored. In the following literature review, we briefly outline current market developments in AIED and discuss AI applications that are currently used in educational technology (EdTech). Based on a conceptual analysis, we combine various human-centered AI (HCAI) approaches to suggest a new model of how AI technologies can be made increasingly transparent in educational contexts, in a way that can be purposefully adapted to human values for future developments.

AI in Education

Market development of AIED

Implementing AI technologies has high potential for innovation in several fields. In the educational sector, service and product providers are entering the market in increasing numbers. They are offering “intelligent learning solutions” through data-based and AI-driven approaches, such as decision trees, neural networks, hidden Markov systems, Bayesian systems, and fuzzy logic (Aldahwan & Alsaeed, 2020). Although AI-based EdTech applications offer rich innovation potential for the business models of providers and users, very few EdTech companies have so far implemented AI technology (Renz & Hilbig, 2020).

Thus, Renz et al. (2020a) have argued that the innovative potential of using AI-based elements in education already exists. The problem is that it often has only been used in a subjunctive role, thus yielding little practical evidence. A worldwide survey of stakeholders in the education sector showed that 20% of the surveyed EdTech companies had already invested in and implemented AI technologies, and another 21% were currently testing AI technologies in their businesses (Global Executive Panel, 2019).

In addition to this emerging innovation dynamic involving AI in EdTech companies, the current COVID-19 pandemic is leading towards a tipping point with faster market development. In a market analysis of two AI-driven EdTech applications, focused on language learning platforms (LLP) and learning management systems (LMS), Renz et al. (2020b) demonstrated that the COVID-19 pandemic has already caused a market shift from low-data business models to data-enhanced business models. The authors assumed that the significant increase in the use of EdTech applications during the current health crisis would also lead to the market entry of more data-driven EdTech applications. The increasing number of users of EdTech applications has generated more data related to learning behaviours and outcomes. Such data provide a basis for further developing AI-based learning systems, in cycles of testing and iterating.

Additionally, we found that intelligent learning solutions on the market follow a principle of rule and content structure, i.e., the system performs a given task using logical reasoning. These methods are summarized under the generic term of symbolic AI (Haugeland, 1985). Holmes et al. (2019) noted that science, technology, engineering, and mathematics (STEM) subjects have played an important role in the development of such AIED applications. Among the most common AIED applications thus far are intelligent tutoring systems (ITS), which allow individualized learning paths in step-by-step tutorials (Alkhatlan & Kalita, 2019). One reason that STEM subjects are particularly suitable for ITS applications is that they usually have clearly defined rules and a well-structured approach (Holmes et al., 2019). Research has shown that EdTech companies must prepare for the development and use of AI technologies and will need to accelerate their existing innovation dynamics in servicing the education market in the near future.

Current AI applications in education

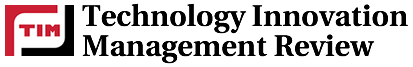

Ahmad et al. (2020) presented a bibliometric analysis of AI applications in education. The authors divided the field of AI applications in education into ITS, evaluation, personalized learning, recommender systems, student performance, sentiment analysis, retention and dropout, and classroom monitoring. Holmes et al. (2019) provided another overview of current AI applications in education. The authors classified four main types of AIED applications: ITS, dialogue-based tutoring systems (DBTS), explorative learning environments (ELE), and automatic writing evaluation (AWE). Figure 1 summarizes the most popular EdTech providers selected according to Holmes et al.’s (2019) classification.

Figure 1. Overview of current AIED applications (Holmes et al., 2019)

Whether an intelligent learning system operates based on individual learning data on behaviour or whether it is based on logical reasoning is not always known by the user. Nevertheless, it can be expected that an increasing number of EdTech applications will soon be developed based on AIED. It is therefore essential to establish appropriate regulations to ensure the responsible and sustainable development of such applications. Human-centered AI (HCAI) is one possible approach that holds promise for the responsible implementation of AI in education, including educational products and services.

Literature Insights on Human-centered AI

The theoretical concept

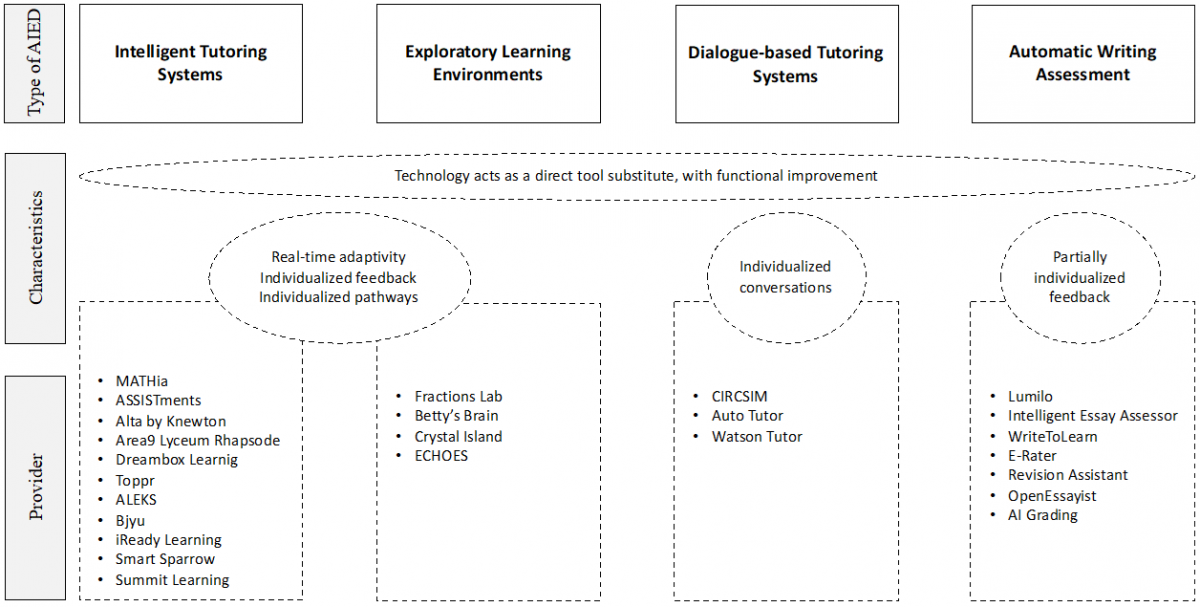

Many strands of public and scientific discourse assume that AI technologies will replace the human workforce in an increasing number of areas, thus making humans redundant as employees (e.g., Popenici & Kerr, 2017). Hence, many research projects and institutes, such as the European Humane AI project, the Stanford Institute for Human-Centered Artificial Intelligence, and research groups at MIT and UC Berkeley (Xu, 2019), have undertaken initiatives to understand the human aspects of AI, in order to develop a more responsible AI that enhances the capabilities of humans rather than aiming to replace them. Although there is no concrete definition of HCAI, the general understanding is that it is a design thinking approach that puts humans at the center of AI development, rather than considering AI automation as a replacement for human agency and control. Furthermore, HCAI “is designed with a clear purpose for human benefit while being transparent about who has control over the data and algorithms” (Schmidt, 2020). Shneiderman (2020) reframed AI as using algorithms to create systems with humans at the centre, thereby framing HCAI as our contemporary version of a second Copernican revolution.

Figure 2. The transformative power of HCAI (Shneiderman, 2020)

In general, it remains unclear which areas of AI development already use HCAI approaches and which do not make HCAI their focus. Nevertheless, various approaches to HCAI development in AI applications have been adapted in different areas to achieve better user experiences. One example is in the healthcare sector, where AI can help identify potential tumors in X-ray images. This application enables radiologists to quickly focus on areas highlighted by the AI and provide targeted treatments for patients (Dembrower et al., 2020). Another growing example of HCAI use is in customer management systems. Some companies employ chatbots and digital agents to automate and streamline responses, which can lead to a less-than-ideal customer experience. With the HCAI approach, the designed (weak) AI system instead helps the human call center agent identify the right information, which speeds up the answering process and provides a better assisted customer experience (Forbes Insights, 2020).

HCAI design and framework approaches

Despite increased AI implementations for education, not enough attention has yet been paid to the role of human values in developing AI technology. Some scientists have recently started working on design approaches that focus on human values (Auernhammer, 2020). Each of these design approaches provides a valuable perspective on designing for people. One of them, value-sensitive design (VSD), is a theoretically grounded approach to designing technology that accounts for human values in a principled and comprehensive manner. It provides diverse perspectives on society, personal interaction, and human needs in the design of computer systems, such as AI. Hence, the VSD approach provides an opportunity to research and examine the effects of AI on people through a particular lens (Himma & Tavani, 2008; Friedman et al., 2017).

Another solution that mitigates these challenges has been to follow a “design-for-values” methodological approach. This approach aims at making moral values part of technological design and development (Dignum, 2019). Values are often interpreted as high-level abstract concepts that are hard to operationalize in concrete technical functionalities. However, the design-for-values approach has the advantage of placing human rights, human dignity, and human freedom at the center of AI design. The design-for-values approach has assisted in building HCAI by helping to identify social values and to decide on a moral deliberation approach (through algorithms, user control, and regulation), thereby linking these values to formal system requirements and concrete functionalities (Dignum, 2019).

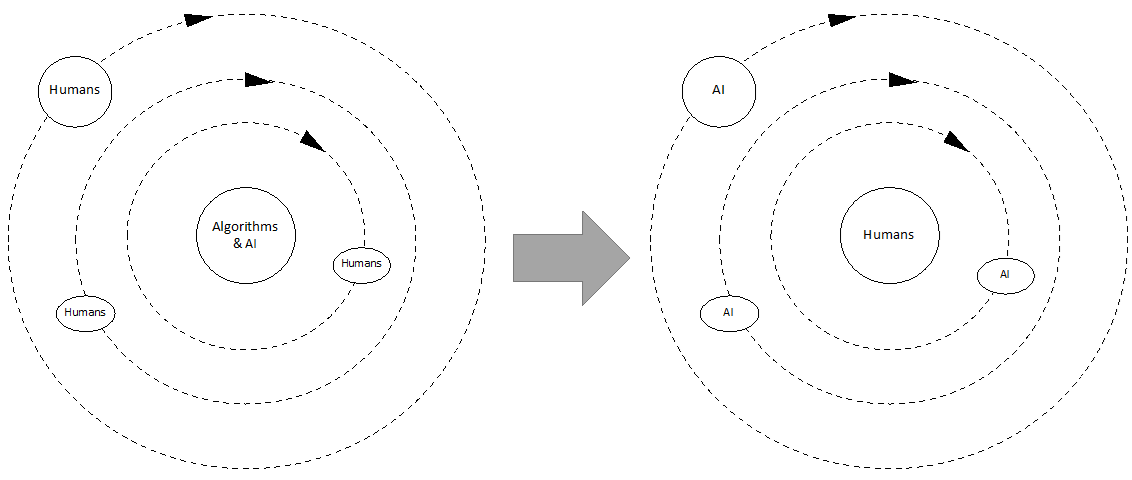

Xu (2019) proposed an extended HCAI framework (see Figure 3) that includes the following three main components: 1) ethically aligned design, which creates AI solutions that avoid discrimination, maintain fairness and justice, and do not replace humans; 2) technology that more fully reflects human intelligence, which further enhances AI technology to reflect the depth of human intelligence and character; and 3) human factors design, which ensures that AI solutions are explainable, comprehensible, useful, and usable.

Figure 3. An extended HCAI framework (Xu, 2019)

A wide range of initiatives now seek to establish ethical guidelines and frameworks, sometimes offering quick solutions to ethical, societal, and legal problems, in an attempt to build socially responsible AI. For example, Algorithm Watch is a non-profit organization that helps point out ethical conflicts. It currently lists 150 ethical guidelines for making algorithmic decision-making processes effective and inclusive (https://algorithmwatch.org). Similarly, the AI4People Ethical Framework offers a series of recommendations for developing and adopting AI. The framework is especially tailored to the European context (Floridi et al., 2018). In their meta-analysis of ethical frameworks, Floridi and Cowls (2019) found that almost all guidelines were based on the same set of principles and themes. What was missing, however, was a concrete methodology for applying and mapping these principles in practice. In addition, the requirements and different levels of understanding about AI across disciplines have become increasingly diverse (Renz et al., 2020a).

Nonetheless, we believe that specific designs for HCAI approaches should be adapted to fit related needs. In the following sections, based on the results of a conceptual analysis, we will propose a framework that combines several HCAI approaches and ideas to derive a map of AI technologies in education and EdTech.

Research Methodology

This paper reports the results of a conceptual analysis that adopts an HCAI approach in the field of education and EdTech. Our conceptual analysis aims to extend a conceptual theory by either postulating a new relationship or establishing that an already known relationship exists between previously unrelated concepts or approaches (Kosterec, 2016). This form of analysis allowed us to introduce the HCAI concept to AIED thinking, as it was not considered in the original theory.

The main steps in the research project were as follows: based on an initial literature review, we identified the current relevance of AI applications in the EdTech market. We aimed to better understand the extent to which AI technologies were already being applied in education, as well as the specific subareas in which recent developments have been taking place. In this paper, the literature review concludes with a presentation of the HCAI approach, along with selected frameworks and designs. So far, few previous studies have followed this approach.

In the next step, we reflected on and combined HCAI approaches in the AIED field to shape a model that aims to structure and address relevant dimensions of the HCAI approach according to AIED applications. Our model is also intended to help increase the transparency of AIED applications. For this purpose, we included human-centered dimensions, such as trust, that are relevant for AI development in the education sector. The results of our analysis showed that discussion involving HCAI approaches is still in its infancy. We therefore believe it is all the more important to open the discussion to new perspectives, which will then be verified in practice during further research steps.

Results

A human-centered AI approach in education

As AI has gradually been adopted in education for the purpose of teaching and learning, debate has persisted on the educational value of the technology (Luckin & Cukurova, 2019). The fear that AI will make the role of teachers redundant has been offered as a main concern by both teachers and educational institutions (Popenici & Kerr, 2017). As a result of the uncertainty, progress involving AI technology together with learning analytics (LA) in education has lagged far behind other domains, such as healthcare and finance. The AI systems used currently in education enhance already existing technology by providing students with personalized lessons based on their learning patterns, knowledge, and interest in a field. However, ethical issues arise with this usage, as AI requires a large amount of learner data and sensitive information for model training. In addition, questions have been raised about how AI education systems could be theoretically and pedagogically sound (Chen et al., 2021).

We believe that learning technology should be human-centered because it aims at teaching and interactive activities. The HCAI approach now taking shape aims to enhance human capabilities, such as by allowing teachers to build their own computerized lessons using insights gathered from an AI tutoring system (Weitekamp et al., 2020). AI-supported learning environments therefore must focus not only on performance, but also on human emotions and outcomes. Further discussion is thus required regarding not only ethics and norms, but also the effects of “smarter” learning environments on the current technological environment, including learning platforms and learning communities (Yang et al., 2021). To the best of our knowledge, an HCAI approach has not previously been considered for developing AIED. In this paper, we present a model that uses HCAI approaches for developing and evaluating educational technologies with the intention of offering more transparency to providers and consumers regarding the impact of AI technology.

HCAI teaming model of education

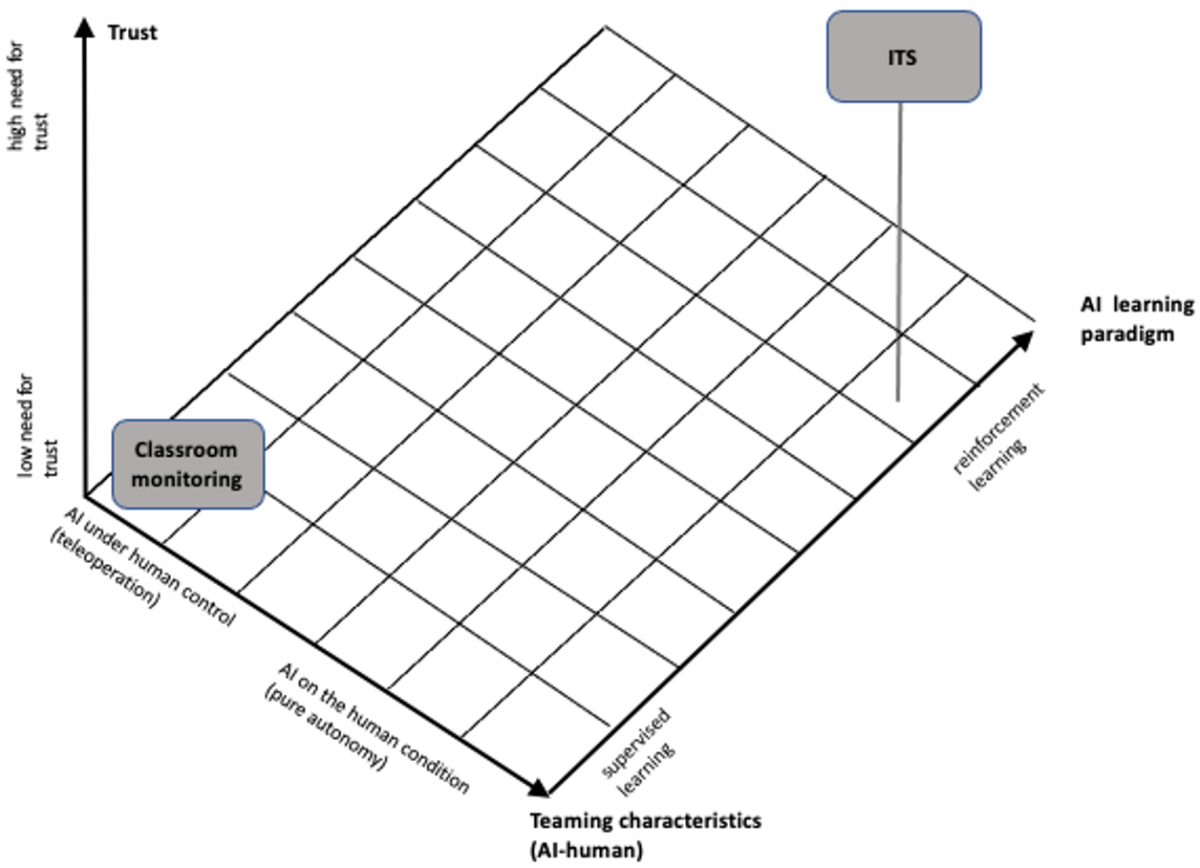

This section describes the model, which was developed from two theoretical perspectives on how HCAI can be interpreted: “AI under human control” (Shneiderman, 2020) and “AI under human conditions” (Stanford HAI, 2020). AI under human control is judged by the degree of human control over AI. At one end, AI is fully controlled by humans and is used only to support automation. At the other end is autonomy, which is determined as fully as possible by AI. AI under human conditions is a type of reflexive judgment that refers to the design of AI algorithms with humans in mind. This type of AI demands computational and judgmental processes that can be explained and interpreted, as well as continuous adaptations of AI algorithms based on human contexts and social phenomena. We used these two perspectives (AI under human control and AI under human conditions) as a starting point for structuring AI applications for education according to HCAI (Ahmad et al., 2020). Furthermore, following Dubey et al. (2020), we interpreted the interactions between humans and AI as “teaming”. In the context of teaming, AI is intelligent enough not only to perform operations and analyze data, but also to work with humans according to predefined rules and structures. Dubey et al. (2020) used the idea of evaluating effective team collaboration between an AI and a human being to develop a taxonomy that captures AI–human teaming concepts. The main components are task characteristics, teaming characteristics, learning paradigms, and trust:

(1) Task characteristics include goals to be solved collaboratively, which can be common, adversarial, or independent; task allocation describes the performance of both people and AI, as well as the variety of roles that capture AI as personal assistant, teamwork-facilitator, associate, or collective moderator.

(2) Teaming characteristics relate directly to integrating people with AI assistants. These vary depending on the relationship between the two entities. They can be described, for example, as fully autonomous AI or as AI under human control, as well as AI that responds to human intervention, whether by the human's own volition or when requested by the machine (i.e., “human-on-the-loop”). Teaming characteristics further describe aspects such as observability, predictability, and adaptability.

(3) The learning paradigm addresses both human learning processes (mental models) and AI learning, including supervised, semi-supervised, unsupervised, and reinforcement learning.

(4) Trust is considered vital in the teaming context. It is directly related to the concept of calibrated trust (people are aware of AI capabilities and can adjust their level of trust according to the situation) and interpretability (a person’s ability to interpret an AI’s behaviour).

We applied a teaming model to structure and address relevant aspects of HCAI and adapt them to the context of educational processes (Figure 4). To represent the task characteristics of AI in this specific context, we followed Ahmad et al. (2020) and proposed positioning the AI applications (ITS, evaluation, personalized learning, sentiment analysis, student performance, recommender systems, retention and dropout, and classroom monitoring) according to the characteristics of the other components of Dubey et al.'s (2020) framework. Figure 4 illustrates our approach using two applications as examples, which we considered to have opposite characteristics: ITS and classroom monitoring.

Figure 4. AI applications in education in the HCAI teaming model (authors' visualization)

Classroom monitoring combines the use of Internet of Things (IoT) devices and computational algorithms (for example, computer vision techniques, machine learning, and data analysis) in the classroom. The main goal is to support teachers in their primary tasks of monitoring and analysing students’ performances (Lim et al., 2017). Classroom monitoring thus eliminates the need for direct, uninterrupted observation and allows teachers to focus on the learning process. In addition, data can be collected and evaluated later. However, a teacher is always involved by making decisions and initiating changes in the functioning or observational focus of the "social machine" (Berners-Lee & Fischetti, 1999). Classroom monitoring can thus be described as an application that requires high human involvement, has a low need for trust, and follows a supervised learning paradigm. Although room occupancy prediction is a longstanding problem, the use of advanced AI technology as a tool to measure or increase the efficiency of room utilization is a new issue. Ethical concerns or limitations arise from, among other things, observing classrooms over an extended period to analyze teachers' teaching methods and students' learning experiences (Raykov et al., 2016; Ahmad et al., 2020).

ITS aim to provide personalized instruction and feedback to learners, often through AI technology and without a human teacher. This aim reflects the fact that, traditionally, AI algorithms and systems have been developed with the notion of harnessing the efficiency of machine automation and optimizing the capability of AI systems. Our model therefore associates ITS with a high degree of AI autonomy, with unsupervised and reinforcement learning paradigms, and thus with a high need for trust. In contrast, we propose building EdTech solutions based on an appropriate HCAI design thinking approach that includes human values and measures human performance, while at the same time remaining amenable to personal feedback and agency that celebrates new human capabilities together with AI (Shneiderman, 2020).
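The two example positions described above can be written down as a small data structure. The following is only an illustrative sketch, not part of the model itself: the class and field names, the two-level scale, and the `needs_closer_human_oversight` rule are assumptions introduced here, and "high human involvement" is treated as the opposite end of the same axis as "high AI autonomy".

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Level(Enum):
    """Coarse two-level scale (an assumption; the model does not fix a scale)."""
    LOW = "low"
    HIGH = "high"


class LearningParadigm(Enum):
    """AI learning paradigms named in the teaming taxonomy."""
    SUPERVISED = "supervised"
    SEMI_SUPERVISED = "semi-supervised"
    UNSUPERVISED = "unsupervised"
    REINFORCEMENT = "reinforcement"


@dataclass(frozen=True)
class TeamingProfile:
    """Hypothetical record of where one AIED application sits in the model."""
    application: str
    ai_autonomy: Level
    need_for_trust: Level
    learning_paradigms: Tuple[LearningParadigm, ...]


# Positions as described in the text for the two example applications.
CLASSROOM_MONITORING = TeamingProfile(
    application="classroom monitoring",
    ai_autonomy=Level.LOW,  # high human involvement
    need_for_trust=Level.LOW,
    learning_paradigms=(LearningParadigm.SUPERVISED,),
)

ITS = TeamingProfile(
    application="intelligent tutoring system",
    ai_autonomy=Level.HIGH,
    need_for_trust=Level.HIGH,
    learning_paradigms=(
        LearningParadigm.UNSUPERVISED,
        LearningParadigm.REINFORCEMENT,
    ),
)


def needs_closer_human_oversight(profile: TeamingProfile) -> bool:
    """Hypothetical rule of thumb: flag applications that combine
    high AI autonomy with a high need for trust."""
    return (
        profile.ai_autonomy is Level.HIGH
        and profile.need_for_trust is Level.HIGH
    )
```

Under these assumptions, a provider could record each application once and then query which ones sit at the high-autonomy, high-trust end of the model, where greater transparency and human influence would matter most.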

Weitekamp et al.’s (2020) newly developed methods involve AI technologies that allow a teacher to teach an AI system (ITS) that then teaches students. With this AI classroom method, a human teacher demonstrates to the computer how to solve specific problems, such as multi-column addition. If the computer provides the wrong solution to the problem, it indicates to the human teacher potential areas of difficulty for students. This authoring process helps teachers understand students’ trouble spots because the machine learning system often stumbles at the same problems that students do. As we face uncertainties regarding whether to enhance machine capabilities or human capabilities, it seems to be the right time to rethink the development of AI systems that aim at satisfying educational purposes. HCAI thus becomes essential in ensuring that AI solutions responsibly prioritize human values and human dignity.

In our HCAI model for AIED, we align the dimensions of teaming characteristics with HCAI perspectives (Shneiderman, 2020; Stanford HAI, 2020). Our model could help to structure and address relevant aspects of the HCAI approach according to AIED applications. Furthermore, our model addresses individual degrees in the development of teaming characteristics, learning paradigms, and trust, with the aim of increasing the transparency of AIED applications. Hence, we also considered Schmidt's (2020) argument that HCAI approaches should establish greater transparency of data and algorithms, which appears to be one of the first steps in reflecting on the use of HCAI approaches in EdTech.

Regarding existing and new AIED applications, our model provides a basic orientation about the degree to which AI technologies relate to human beings and where, if necessary, people should have greater influence on the design and autonomy over the technology. We should keep in mind that AI architecture cannot be fully incorporated into any such model, and thus our model only helps to provide an initial structure. How particular HCAI approaches are designed and implemented in individual EdTech applications could not be mapped in the model presented here.

Overall, we suggest that AI systems should not only keep humans “in the loop”, but also provide higher levels of human control where AI is created under human-centered conditions. HCAI systems should aim to lead to an increase in human performance that achieves higher levels of self-efficacy, mastery, creativity, and responsibility.

Conclusion and Outlook

In our research, we found that the EdTech community is still in the early stages of incorporating AI into educational tools for teaching and learning. Even though these AI tools appear to have great potential, they are scarcely used in current educational institutions. One reason for the restrained development of AIED might be that people are, in principle, skeptical about using or developing AI systems due to often-repeated dystopian framings of the concept of “AI”, which Dietvorst et al. (2015) described as algorithm aversion. Jussupow et al. (2020) showed that the algorithm aversion phenomenon is influenced by various factors, though no investigation has yet looked at whether it is also present in the context of AIED.

Avanade's (2017) study on HCAI found that 88% of global business and IT decision makers do not know how to use AI, and 79% said that corporate resistance limits their implementation of AI. Due to this gap in innovation and development, we suggest that EdTech providers should consider developing more HCAI-based approaches to better realise the potential of AIED. This would allow us to more clearly envision the benefits that AI systems have to offer. We propose that now is the right time to consider value-conscious design principles in developing human-centered and responsible AI that addresses social, legal, and moral values prior to and during the technology development process.

In the current market, AIED systems work in one of two ways: 1) rule-based, where the system is given a set of rules and applies these rules to problems to find an answer; or 2) learning-based, where the system observes, finds patterns, and makes predictions independently. However, with the shift toward developing modern learning-based AI systems, concerns have emerged regarding AI that replaces human control, algorithmic violations caused by bad data, socioeconomic inequalities exacerbated by the technology divide, and privacy violations. The AI community has consequently shifted its focus to emphasize HCAI because of mixed public opinions about AI, such as those expressed by actors involved in education and government, as well as private entities, parents, and leaders of institutions.
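The difference between the two modes can be illustrated with a toy example built on multi-column addition. This is a minimal sketch under stated assumptions, not a description of any existing AIED product: the function and class names are hypothetical, the rule set contains a single hand-written rule, and the "learning" is reduced to a smoothed success frequency per skill.

```python
from collections import Counter, defaultdict


def add_without_carry(a: int, b: int) -> int:
    """Add two non-negative integers column by column, dropping every carry."""
    result, place = 0, 1
    while a > 0 or b > 0:
        result += ((a % 10 + b % 10) % 10) * place
        a, b, place = a // 10, b // 10, place * 10
    return result


def rule_based_feedback(a: int, b: int, answer: int) -> str:
    """Rule-based: fixed, hand-written rules map an answer to feedback."""
    if answer == a + b:
        return "correct"
    # Rule: forgetting to carry produces a recognisable wrong answer.
    if answer == add_without_carry(a, b):
        return "check carrying"
    return "try again"


class FrequencyLearner:
    """Learning-based: estimates success per skill from observed attempts."""

    def __init__(self) -> None:
        self._counts = defaultdict(Counter)

    def observe(self, skill: str, correct: bool) -> None:
        """Record one attempt at a skill and whether it was correct."""
        self._counts[skill][correct] += 1

    def predict_success(self, skill: str) -> float:
        """Return a smoothed success estimate (0.5 for unseen skills)."""
        seen = self._counts[skill]
        total = seen[True] + seen[False]
        return (seen[True] + 1) / (total + 2)
```

The rule-based function behaves the same for every learner and can be read and audited line by line. The learner's predictions, by contrast, depend entirely on the attempts it has observed, which is why data quality and privacy become central concerns for learning-based systems.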

We thus support the need to rethink how to develop an AI system that complies with human values without posing risks to humanity. Such a shift has not been noticeable because HCAI has the same capabilities as AI, with the only difference being that instead of replacing human workers, HCAI aims at augmenting human workers and enhancing business outcomes through improved human–machine interfaces. In this paper, we therefore described the nature of the human–AI relationship as “teaming” and provided an initial framework for structuring relevant aspects of HCAI teaming according to AIED applications.

We believe that educating stakeholders about the potential and utopian capabilities of HCAI will help in attaining the common goal of student and teacher success, while reducing some of the anxieties and fears people have of AI systems. An increasing number of initiatives already exist at the public level (for example, Elements of AI in Finland and AI Campus in Germany), which provide information about the opportunities and potential, as well as challenges and risks of AI, thus raising awareness about the topic. In addition, data literacy initiatives have increasingly aimed to improve the general understanding of how data can be (mis)used. Such initiatives and educational projects will contribute to raising social awareness about AI technologies. Further research would benefit from analyzing cases of how HCAI approaches have influenced the development of AIED in the educational market and contributed to application readiness.

Acknowledgments

This research project was funded by the German Federal Ministry of Education and Research (Funding Number: 16DII126). The authors are responsible for the content of this publication.

An earlier version of this article was presented at ISPIM Connects Global 2020: Celebrating the World of Innovation - Virtual, 6-8 December 2020. The International Society for Professional Innovation Management (ISPIM, ispim.org) is a network of researchers, industrialists, consultants, and public bodies who share an interest in innovation management.

References

Ahmad, K., Iqbal, W., El-Hassan, A., Qadir, J., Bendaddou, D., Ayyash, M., & Al-Fuqaha, A. 2020. Artificial Intelligence in Education: A panoramic review. DOI: https://doi.org/10.35542/osf.io/zvu2n

Aldahwan, N. & Alsaeed, N. 2020. Use of Artificial Intelligent in Learning Management System (LMS): A systematic literature review. International Journal of Computer Applications, 175(13): 16-26.

Algorithm Watch. 2020. Available at: https://algorithmwatch.org

Alkhatlan, A. & Kalita, J. 2019. Intelligent Tutoring Systems: A comprehensive historical survey with recent developments. International Journal of Computer Applications, 181(43): 1-20. DOI: https://doi.org/10.5120/ijca2019918451

Auernhammer, J. 2020. Human-Centered AI: The role of human-centered design research in the development of AI. DRS2020: Synergy. DOI: https://doi.org/10.21606/drs.2020.282

Avanade. 2017. Human-centered artificial intelligence. An augmented workforce is your key to success. Available at: https://www.avanade.com/-/media/asset/point-of-view/artificial-intelligence-pov-new.pdf

Berners-Lee, T. & Fischetti, M. 1999. Weaving the Web: The Original Design and Ultimate Destiny of the World Wide Web by its inventor. Harper.

Dembrower, K., Wåhlin, E., Liu, Y., Salim, M., Smith, K., Lindholm, P., Eklund, M., & Strand, F. 2020. Effect of Artificial Intelligence-Based Triaging of Breast Cancer Screening Mammograms on Cancer Detection and Radiologist Workload: A retrospective simulation study. The Lancet Digital Health, 2(9): e468-e474. DOI: https://doi.org/10.1016/S2589-7500(20)30185-0

Dietvorst, B., Simmons, J.P., & Massey, C. 2015. Algorithm Aversion: People erroneously avoid algorithms after seeing them err. Journal of Experimental Psychology: General, 144(1): 114-126. DOI: https://doi.org/10.1037/xge0000033

Dignum, V. 2019. Responsible Artificial Intelligence: How to develop and use AI in a responsible way. [book series] Artificial intelligence: foundations, theory, and algorithms (AIFTA). DOI: https://doi.org/10.1007/978-3-030-30371-6

Dubey, A., Abhinav, K., Jain, S., Arora, V., & Puttaveerana, A. 2020. HACO: A Framework for Developing Human-AI Teaming. In Proceedings of the 13th Innovations in Software Engineering Conference (formerly known as India Software Engineering Conference), 1-9. DOI: https://doi.org/10.1145/3385032.3385044

EdTechXGlobal. 2016. EdTechXGlobal Report 2016: Global EdTech industry report: A map for the future of education and work. Available at: http://ecosystem.edtechxeurope.com/2016-edtech-report

Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V., Luetge, C., Madelin, R., Pagallo, U., Rossi, F., Schafer, B., Valcke, P., & Vayena, E. 2018. AI4People an Ethical Framework for a Good AI Society: Opportunities, risks, principles, and recommendations. Minds and Machines, 28: 689-707. DOI: https://doi.org/10.1007/s11023-018-9482-5

Floridi, L. & Cowls, J. 2019. A Unified Framework of Five Principles for AI in Society. SSRN Electronic Journal. DOI: https://doi.org/10.2139/ssrn.3831321

Forbes Insights. 2020. How AI is Revamping the Call Center. Available at: https://www.forbes.com/sites/insights-ibmai/2020/06/25/how-ai-is-revamping-the-call-center/

Friedman, B., Hendry, D., & Borning, A. 2017. A Survey of Value Sensitive Design Methods. Foundations and Trends in Human-Computer Interaction, 11(2): 63-125. DOI: https://doi.org/10.1561/1100000015

Global Executive Panel. 2019. Adoption of AI in Education is Accelerating: Massive potential but hurdles remain. Available at: https://www.holoniq.com/notes/ai-potential-adoption-and-barriers-in-global-education/

Haugeland, J. 1985. Artificial Intelligence: The very idea. Cambridge, Mass.: MIT Press.

Himma, K. & Tavani, H. 2008. The Handbook of Information and Computer Ethics. John Wiley & Sons, Inc., New Jersey.

Holmes, W., Anastopoulou, S., Schaumburg, H., & Mavrikis, M. 2018. Technology-enhanced Personalized Learning: Untangling the evidence. Stuttgart: Robert Bosch Stiftung GmbH.

Holmes, W., Bialik, M., & Fadel, C. 2019. Artificial intelligence in education: Promises and implications for teaching and learning. Boston: Independently published.

Jussupow, E., Benbasat, I., & Heinzl, A. 2020. Why are We Averse Towards Algorithms? A comprehensive literature review on algorithm aversion. Research Papers, 168. Available at: https://aisel.aisnet.org/ecis2020_rp/168

Kosterec, M. 2016. Methods of Conceptual Analysis. Filozofia, 71(3): 220-230.

Lim, J.H., Teh, E.Y., Geh, M.H., & Lim, C.H. 2017. Automated Classroom Monitoring with Connected Visioning System. Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC), 386-393. DOI: https://doi.org/10.1109/apsipa.2017.8282063

Luckin, R., Holmes, W., Griffiths, M., & Forcier, L.B. 2016. Intelligence Unleashed: An argument for AI in education. Pearson, London.

Luckin, R. & Cukurova, M. 2019. Designing Educational Technologies in the Age of AI: A learning sciences-driven approach. British Journal of Educational Technology, 50. DOI: https://doi.org/10.1111/bjet.12861

Popenici, S.A.D. & Kerr, S. 2017. Exploring the Impact of Artificial Intelligence on Teaching and Learning in Higher Education. Research and Practice in Technology Enhanced Learning, 12(1). DOI: https://doi.org/10.1186/s41039-017-0062-8

Raykov, Y., Ozer, E., Dasika, G., Boukouvalas, A., & Little, M. 2016. Predicting Room Occupancy With a Single Passive Infrared (PIR) Sensor Through Behavior Extraction. Mathematics, Engineering & Applied Science, Systems Analytics Research Institute, 1061-1027. DOI: https://doi.org/10.1145/2971648.2971746

Renz, A. & Hilbig, R. 2020. Prerequisites for Artificial Intelligence in Further Education: Identification of drivers, barriers, and business models of educational technology companies. International Journal of Educational Technology in Higher Education, 17(1). DOI: https://doi.org/10.1186/s41239-020-00193-3

Renz, A., Krishnaraja, S., & Gronau, E. 2020a. Demystification of Artificial Intelligence in Education: How much AI is really in the educational technology? International Journal of Learning Analytics and Artificial Intelligence in Education, 2(1): 14. DOI: https://doi.org/10.3991/ijai.v2i1.12675

Renz, A., Krishnaraja, S., & Schildhauer, T. 2020b. A New Dynamic for EdTech in the Age of Pandemics. Paper presented at ISPIM Innovation Conference - Virtual, June 2020.

Schmidt, A. 2020. Interactive Human-centered Artificial Intelligence: A definition and research challenges. International Conference on Advanced Visual Interfaces (AVI ’20), September 28-October 2, 2020, Salerno, Italy. ACM, New York, NY, USA. DOI: https://doi.org/10.1145/3399715.3400873

Shneiderman, B. 2020. Human-Centered Artificial Intelligence: Three fresh ideas. AIS Transactions on Human-Computer Interaction, 12(3): 109-124. DOI: https://doi.org/10.17705/1thci.00131

StudySmarter. 2020. Available at: https://www.studysmarter.de

Weitekamp, D., Harpstead, E., & Koedinger, K. 2020. An Interaction Design for Machine Teaching to Develop AI Tutors. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (CHI '20). Association for Computing Machinery, New York, NY, USA, 1-11. DOI: https://doi.org/10.1145/3313831.3376226

Xu, W. 2019. Toward Human-Centered AI: A perspective from human-computer interaction. Interactions, 26(4): 42-46. DOI: https://doi.org/10.1145/3328485

Yang, S., Ogata, H., Matsui, T., & Chen, N. 2021. Human-Centered Artificial Intelligence in Education: Seeing the invisible through the visible. Computers and Education: Artificial Intelligence, 2, 100008. DOI: https://doi.org/10.1016/j.caeai.2021.100008

Zawacki-Richter, O., Marín, V.I., Bond, M., & Gouverneur, F. 2019. A Systematic Review of Research on Artificial Intelligence Applications in Higher Education: Where are the educators? International Journal of Educational Technology in Higher Education, 16(1). DOI: https://doi.org/10.1186/s41239-019-0171-0

Keywords: artificial intelligence, design for value approach, educational technology, human-centered AI, intelligent tutoring systems